EleutherAI Releases GPT-NeoX-20B, A 20-billion-parameter AI Language Model

By a mysterious writer

Description

GPT-NeoX-20B, a 20-billion-parameter natural language processing (NLP) AI model similar to GPT-3, has been open-sourced.

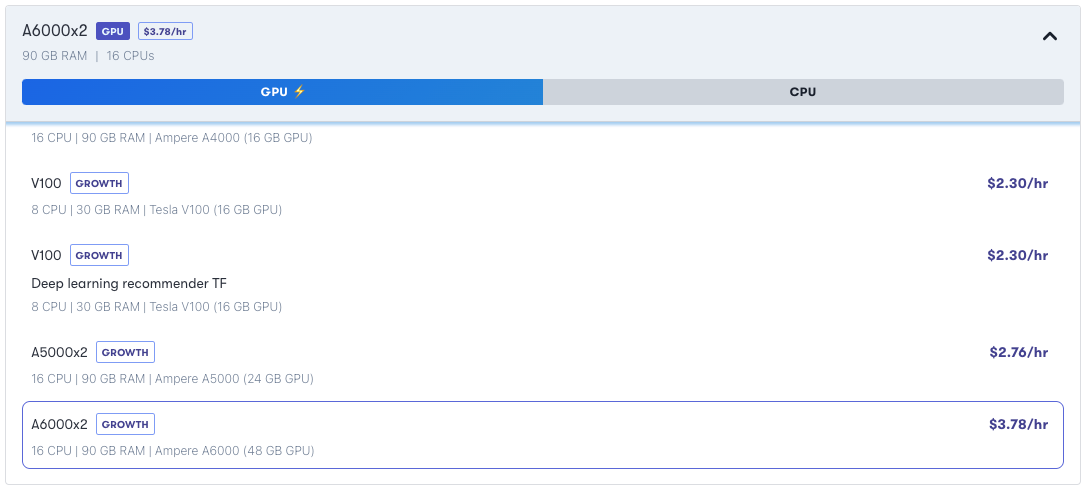

GPT-NeoX: A 20 Billion Parameter NLP Model on Gradient Multi-GPU

Meet GPT-NeoX-20B, A 20-Billion Parameter Natural Language Processing AI Model Open-Sourced by EleutherAI - MarkTechPost

(PDF) GPT-NeoX-20B: An Open-Source Autoregressive Language Model

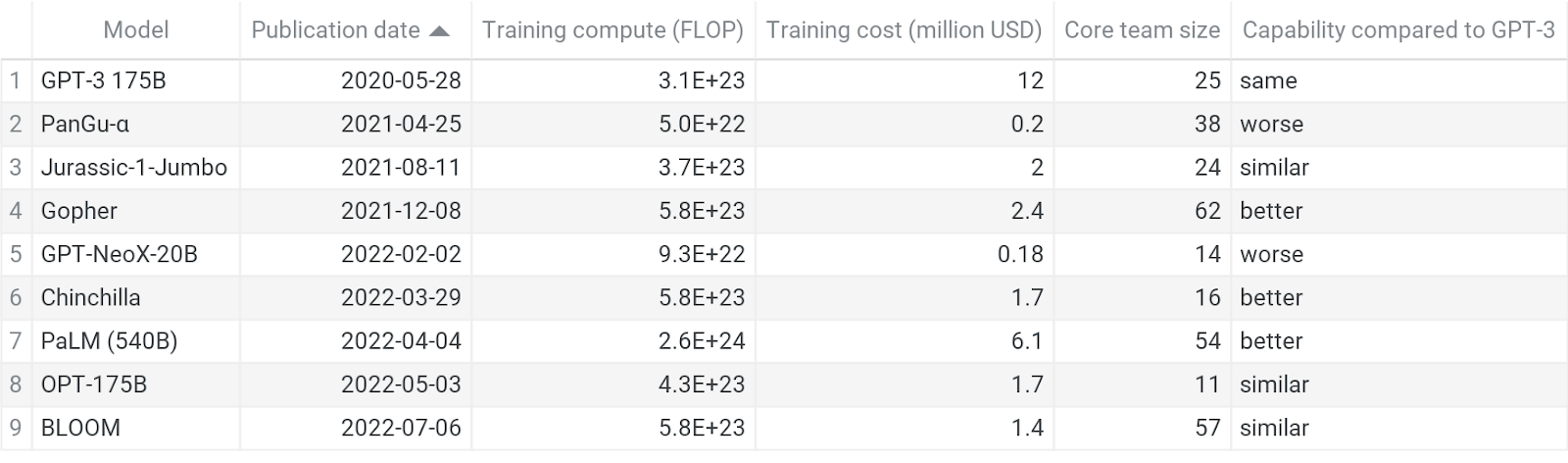

Understanding the diffusion of large language models: summary — EA Forum

Review — GPT-NeoX-20B: An Open-Source Autoregressive Language Model, by Sik-Ho Tsang

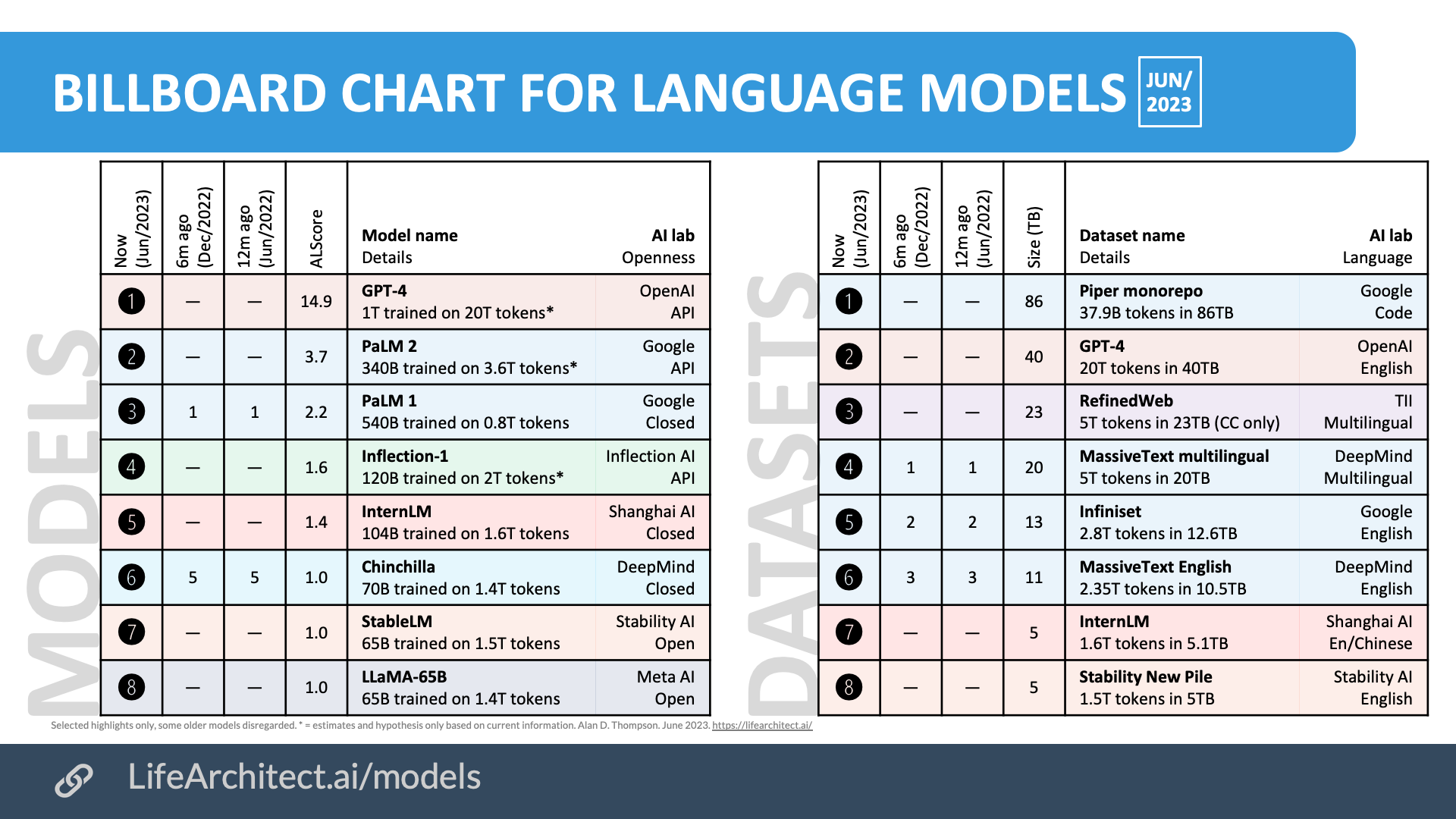

Inside language models (from GPT to Olympus) – Dr Alan D. Thompson – Life Architect

Cerebras-GPT: A Family of Open, Compute-efficient, Large Language Models - Cerebras

[N] EleutherAI announces a 20 billion parameter model, GPT-NeoX-20B, with weights being publicly released next week : r/MachineLearning

Open AI / GPT-3 Presentation [Currently Obsessed]

GPT-NeoX

Transformer models: an introduction and catalog — 2023 Edition - AI, software, tech, and people. Not in that order. By X

EleutherAI Open-Sources 20 Billion Parameter AI Language Model GPT-NeoX-20B
